Overview

I have some fairly common and reasonable goals for the use of my laptop:

- run Linux

- extend the display to a second monitor

- a couple of hours of battery life when unplugged

- easy to configure with minimum hassle

- a good balance of display performance for normal daily work when the laptop is plugged in, without excessive heat or the CPU or GPU fan spinning up noisily at something as simple as moving the mouse

- ability to use the high performance of the Nvidia GPU (e.g. for Data Science) without being forced to reboot or log off/on.

Laptop Specifications and Reality

My laptop is marketed as a ‘gaming laptop’ with a relatively power-efficient 15.6” Sharp IGZO 4K display, an Intel 7th Gen Kaby Lake i7-7820HK, 32GB RAM (expandable to 64GB) and an embedded 4-cell 60Wh battery.

With a desire to run Linux (which regardless of this GPU discussion is known to be challenged regarding its power saving) I don’t expect this 4-cell battery to last all day… or even half a day while running a minimal load! However, I know from experience that I can squeeze at least a couple of hours of battery life out of the laptop when running a minimal load on Windows.

Windows has Full Control of the Nvidia GPU

Windows has the ability to fully utilise Nvidia Optimus technology, use the Integrated Intel Graphics for rendering and completely shut off the Nvidia GPU. However, when necessary (for example, when doing anything graphically intensive such as gaming), Windows will dynamically and seamlessly switch to using the Nvidia GPU for rendering. It can do this because the Nvidia drivers for Windows support this on-the-fly muxless switching functionality.

Being able to dynamically shut off the Nvidia GPU saves the battery considerably!

On this laptop, the display ports are wired directly into the Nvidia card, so all operating systems are forced to turn on the Nvidia GPU in order to extend the display to another monitor. But when that second monitor is unplugged, Windows can immediately and seamlessly shut off the Nvidia GPU and save power.

Linux Doesn’t :(

The proprietary Nvidia drivers for Linux, however, do NOT provide the same switching capability. In fact, the historical and current state (as of 2018) of using the various proprietary and community-hacked open-source drivers on Linux is quite simply abysmal and confusing as hell. Valiant efforts such as Bumblebee, Nouveau and nvidia-xrun are in various states of disrepair, whether because of performance issues or missing support for modern graphics libraries such as Vulkan. All of them suffer from usability issues. Unlike Windows users, why should Linux users be forced to reboot their machine in order to turn the Nvidia GPU on or off? Why should we need to run different X sessions?! Why can’t the same hardware work seamlessly like in Windows, powering up the GPU automatically and only when necessary, and not killing my battery when the laptop is unplugged?

Nvidia has not provided equivalent driver functionality for Linux and has thus caused (and is still causing) many years of unnecessary pain and suffering for the Linux community. If it were a technical issue with the Linux code, it could have been solved by now. Nvidia is still acting like the ‘old’, arrogant Microsoft of the 2000s (and all credit to Microsoft: the consensus now is that they have drastically improved by embracing the community, open source and other platforms such as Linux).

One example of the Linux community being ignored repeatedly for years is on this Nvidia forum, where an Nvidia developer explicitly stated “NVIDIA has no plans to support PRIME render offload at this time”. That means they won’t provide functionality to let the integrated GPU perform the rendering and pass the result through to the display ports attached to the Nvidia GPU for display on an external monitor. In 2016, that statement spawned further discussion on this thread on the Nvidia forum, where Nvidia continues to ignore members of the Linux community today.

What’s This About?

Regardless of all the challenges, my desire to run Linux on this laptop is still greater than my desire to run Windows… Below is a comparison of some of the more interesting graphics driver configurations I tried in an effort to find one that gets close to fulfilling my aforementioned goals.

Common Test Configuration

All tests were run with the same common configuration:

- Metabox Prime P650HP laptop

- Nvidia GeForce GTX 1060M 6G GDDR5

- Antergos (Arch) Linux running KDE Plasma

- Laptop screen at 50% brightness

- Screensavers off

- Minimal applications running

- CPU average < 2% unless otherwise indicated

Test Measurements

Some of the measurements are missing, and that is either because they were not available at the time or I ended the test early due to the result being sufficiently obvious for my purpose.

On Linux, the battery charge percentage and time estimate were measured using the Battery Time widget. On Windows, the usual power/battery icon in the taskbar notification area was used.
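For anyone wanting to repeat the measurements without a desktop widget, here is a minimal sketch that reads the charge percentage from sysfs instead. It is not what I used for the tables below. It assumes the battery is exposed as `/sys/class/power_supply/BAT0` (the name varies between machines), and `battery_percent` and `log_battery` are hypothetical helper names.

```shell
# Read the battery charge in percent from sysfs.
# Assumption: the battery directory is BAT0 unless another path is passed in.
battery_percent() {
    local dir="${1:-/sys/class/power_supply/BAT0}"
    cat "$dir/capacity"
}

# Print "<elapsed minutes> min: <charge>%" a fixed number of times.
# Usage: log_battery <interval_seconds> <samples> [battery_dir]
log_battery() {
    local interval="$1" samples="$2" dir="$3" i=0 start now
    start=$(date +%s)
    while [ "$i" -lt "$samples" ]; do
        now=$(date +%s)
        printf '%d min: %s%%\n' $(( (now - start) / 60 )) "$(battery_percent "$dir")"
        i=$((i + 1))
        # Sleep only between samples, not after the last one
        if [ "$i" -lt "$samples" ]; then
            sleep "$interval"
        fi
    done
}
```

For example, `log_battery 300 5` would take five samples, five minutes apart.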

Windows - Baseline - Integrated Intel Graphics Only

- No external monitor

- Nvidia “off”

- Windows “Balanced” power plan

| Duration (minutes) | Battery Charge (%) | Calculated Time Left (h.mm) |
|---|---|---|
| 0 | 100 | ? |
| 10 | 96 | ? |
| 12 | 95 | 3.39 |
| 15 | 94 | ? |
| 20 | 92 | 3.33 |

Linux - Baseline - Integrated Intel Graphics Only

- No external monitor

- i915 only - Nvidia “off”

- Bumblebee installed

| Duration (minutes) | Battery Charge (%) | Calculated Time Left (h.mm) |
|---|---|---|
| 0 | 100 | ? |
| 10 | 94 | 2.47 |
| 12 | 93 | 2.49 |
| 15 | 92 | 2.44 |
| 20 | 88 | 2.39 |

Windows - Display Extended to External Monitor

- External monitor connected via the Nvidia graphics card’s HDMI out

- Windows “Balanced” power plan

| Duration (minutes) | Battery Charge (%) | Calculated Time Left (h.mm) |
|---|---|---|
| 0 | 100 | ? |
| 10 | 94 | ? |
| 12 | 92 | ? |
| 15 | 90 | 2.08 |
| 20 | ? | ? |

Linux - Display Extended to External Monitor (intel-virtual-output)

- External monitor connected via the Nvidia graphics card’s HDMI out

- i915 driver loaded and running the laptop screen

- Nvidia drivers loaded for the output ports

- Bumblebee and intel-virtual-output running (needed because the display ports are wired directly into the Nvidia card), causing an average of ~6% CPU usage with the clock frequency regularly changing in increments between 1.5 and 3.2 GHz

| Duration (minutes) | Battery Charge (%) | Calculated Time Left (h.mm) |
|---|---|---|
| 0 | 100 | ? |
| 10 | 90 | 1.41 |
| 12 | 88 | 1.30 |
| 15 | 86 | 1.31 |
| 20 | 79 | 1.19 |

Linux - Display Extended to External Monitor (Nvidia driver - Max Battery Savings)

- External monitor connected via the Nvidia graphics card’s HDMI out

- Nvidia drivers loaded only

- PerfLevelSrc = 0x3333 (adaptive clock frequency for both battery and AC power)

- PowerMizerDefault = 0x3 (maximum battery power saving)

| Duration (minutes) | Battery Charge (%) | Calculated Time Left (h.mm) |
|---|---|---|
| 0 | 100 | ? |
| 10 | 92 | 1.58 |
| 12 | 90 | 1.49 |
| 15 | 89 | 1.48 |
| 20 | 84 | 1.44 |

Analysis

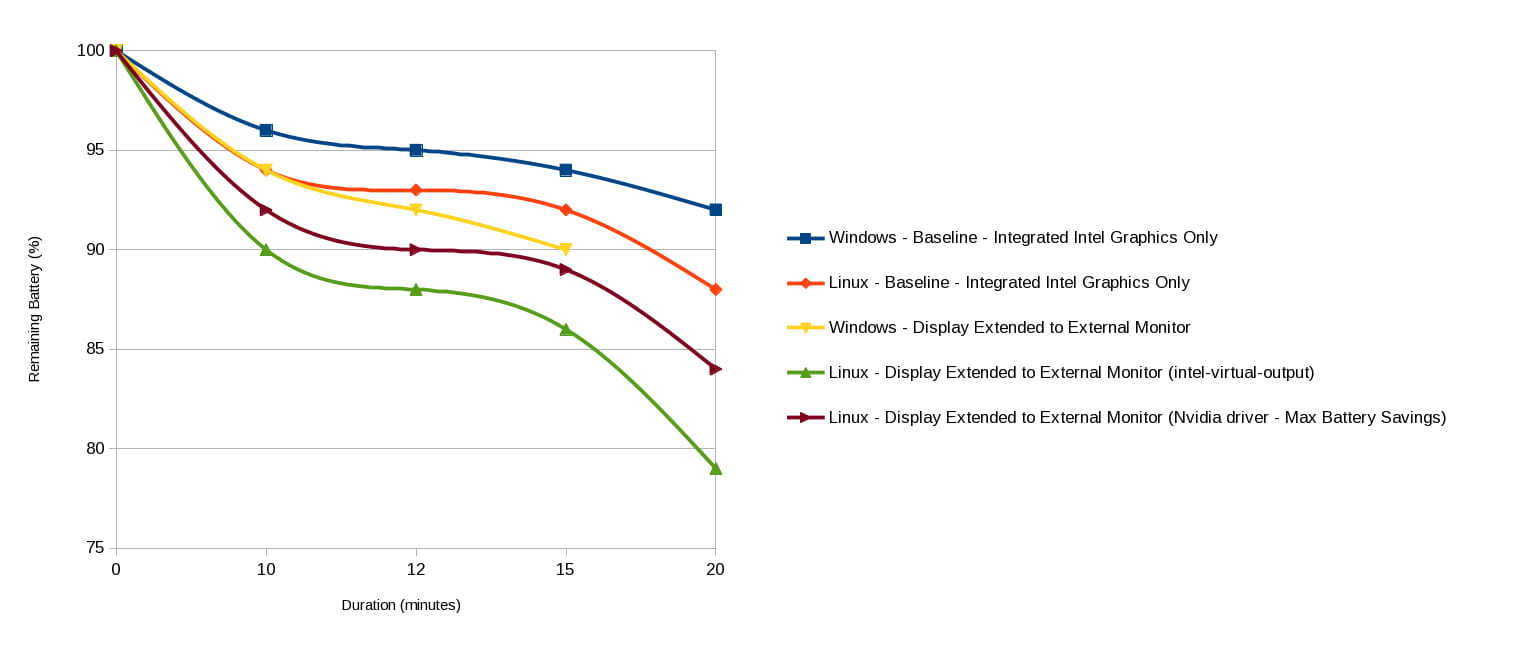

Here is a visual of the above measurements, with the Y-Axis showing the measured battery charge percentage and the X-Axis showing the Duration in minutes.

Immediately one can see a difference in the baseline power usage between Linux and Windows when only the Integrated Intel Graphics chip is being used. This is evidenced by the battery charge at 15 minutes, where Windows was at 94% while Linux was at 92%. I attribute the difference to the fact that I had not yet tuned this installation of Linux for power savings, so the actual battery time I experience after tuning should be better than measured here.

Extending the display to an external monitor forces Windows to turn on the Nvidia GPU, and the resulting battery drain is very similar to that of Linux running the proprietary Nvidia drivers with maximum power savings (with or without extending the display to an external monitor).

This same Linux maximum power saving option is also significantly better than the Linux configuration where the Bumblebee intel-virtual-output approach churns CPU power and the fan in order to copy the video buffers over and extend the display to an external monitor.
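To put the configurations on a common scale, here is a rough sketch that extrapolates a total runtime from a single row of the tables above. It assumes the drain rate stays constant from 100% down to 0%, which is only an approximation and is not how the battery widgets compute their "time left" figures. `estimate_runtime` is a hypothetical helper name.

```shell
# Linear extrapolation of total battery runtime from one measurement.
# Assumption: constant drain rate from 100% to 0%.
# Usage: estimate_runtime <elapsed_minutes> <charge_percent_now>
estimate_runtime() {
    local minutes="$1" charge="$2"
    local used=$((100 - charge))
    if [ "$used" -le 0 ]; then
        echo "n/a"
        return 1
    fi
    # Minutes needed to drain the full 100% at the observed rate
    local total=$((minutes * 100 / used))
    printf '%dh%02dm\n' $((total / 60)) $((total % 60))
}

estimate_runtime 20 92   # Windows baseline at 20 minutes
estimate_runtime 20 88   # Linux baseline at 20 minutes
estimate_runtime 20 84   # Linux, Nvidia driver, max battery savings
estimate_runtime 20 79   # Linux, intel-virtual-output
```

With the 20 minute rows this prints 4h10m, 2h46m, 2h05m and 1h35m respectively, which matches the ordering seen in the chart.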

Performance Testing

Other tests conducted compared the performance of the PowerMizerDefault 0x2 balanced/medium performance mode versus 0x3 maximum power savings mode. Scrolling through a web page was noticeably slower on the maximum power savings mode.

Testing showed that my battery actually lasted more than an hour when the Nvidia drivers were configured with the 0x3 maximum power saving mode.

Screen updates in the 0x2 balanced performance mode did not produce any noticeable screen drawing lag or tearing during normal work usage. This balanced performance mode however would not be acceptable for gaming.

On the maximum performance 0x1 setting, the Nvidia GPU regularly entered Performance Level 4 (even just by moving the mouse). With the higher graphics clock and memory transfer frequency of that Performance Level, the temperature of the GPU was constantly higher – causing the normally passively cooled GPU’s fan to kick into high gear and make excessive noise and heat while the machine was simply idling.

Result

The increased complexity for users, compromises in performance, compatibility issues and incomplete support (such as not working with Vulkan) that come with the open-source efforts are not appetizing.

The analysis above has shown that most of my goals can be achieved simply by using the proprietary Nvidia drivers and keeping the Nvidia graphics turned on with:

- the PowerMizer modes:

  - On AC power, the PowerMizerDefaultAC 0x2 medium performance / balanced power saving mode, which provides ample speed for work activities

  - On battery, the PowerMizerDefault 0x3 maximum power savings mode, which ensures that I can use my laptop unplugged in a long meeting, even when projecting to an external display

- PerfLevelSrc of 0x3333 to ensure the Nvidia GPU is adaptively changing the clock frequency and allowing it to drop as low as possible to save power.

The only unfulfilled goal is the “ability to use the high performance of the Nvidia GPU (e.g. for Data Science) without being forced to reboot or log off/on”.

This 1060M graphics card unfortunately does not support setting a power limit. If it did, I possibly could set the PowerMizerDefaultAC permanently to 0x1 high performance mode and as desired use the command line utility nvidia-smi with the -pl parameter to set the maximum power limit – without a log off/on or restart.

How to Configure the Power Savings Modes?

In order to configure Linux and the proprietary Nvidia graphics drivers to run with my chosen power savings mode, perform the following procedure.

1. Configure an X11 configuration file for the Nvidia driver

Configure the Nvidia driver to use specific PowerMizer settings.

$ sudo nano /etc/X11/xorg.conf.d/20-nvidia.conf

Section "Module"
    Load "modesetting"
EndSection

Section "Device"
    Identifier "Device NVIDIA GPU"
    Driver "nvidia"
    BusID "PCI:1:0:0"
    Option "AllowEmptyInitialConfiguration"

    # For overclocking - only when PowerMizerDefaultAC=0x1
    Option "Coolbits" "31"

    # Max power savings on battery + High performance on AC
    #Option "RegistryDwords" "PowerMizerEnable=0x1; PerfLevelSrc=0x3333; PowerMizerDefault=0x3; PowerMizerDefaultAC=0x1"

    # Max power savings on battery + Balanced performance on AC
    Option "RegistryDwords" "PowerMizerEnable=0x1; PerfLevelSrc=0x3333; PowerMizerDefault=0x3; PowerMizerDefaultAC=0x2"
EndSection

Know Your BusID

You may need to change the BusID to match your PCI configuration. To find your BusID, execute:

$ lspci | grep VGA

00:02.0 VGA compatible controller: Intel Corporation Device 591b (rev 04)

01:00.0 VGA compatible controller: NVIDIA Corporation GP106M [GeForce GTX 1060 Mobile 6GB] (rev a1)

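Then find the bus number at the start of the line for your Nvidia card; in my case above it is “01”. Remove any padding zeros and format the address as PCI:<bus>:<device>:<function>, i.e. PCI:1:0:0. Note that lspci prints these numbers in hexadecimal while the Xorg BusID expects decimal, so a bus of “0a” would become PCI:10:0:0.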
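The conversion described above can be sketched as a small shell function. This is an illustration rather than an official tool, and `lspci_to_busid` is a hypothetical helper name. It treats the lspci fields as hexadecimal and prints them in decimal.

```shell
# Convert an lspci address such as "01:00.0" into an Xorg BusID such as "PCI:1:0:0".
# lspci prints bus, device and function in hexadecimal; the BusID uses decimal.
lspci_to_busid() {
    local addr="$1" bus rest dev fn
    # Drop an optional PCI domain prefix ("0000:01:00.0" -> "01:00.0")
    case "$addr" in
        *:*:*) addr="${addr#*:}" ;;
    esac
    bus="${addr%%:*}"    # text before the colon
    rest="${addr#*:}"    # "device.function"
    dev="${rest%%.*}"
    fn="${rest#*.}"
    printf 'PCI:%d:%d:%d\n' "0x$bus" "0x$dev" "0x$fn"
}

# Example: feed it the first column of the Nvidia line from lspci
# lspci_to_busid "$(lspci | grep -i 'VGA.*NVIDIA' | cut -d' ' -f1)"
```

Running `lspci_to_busid 01:00.0` prints `PCI:1:0:0`, the value used in the configuration file above.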

2. Ensure ACPI is working

The Nvidia driver relies on ACPI in order to know when the laptop switches from running on AC power to running on battery and vice versa.

To check whether the ACPI daemon is enabled, execute the command:

$ systemctl is-enabled acpid

If it is not installed, install the acpid package:

$ sudo pacman -S acpid

Until the daemon is running, the Xorg log file shows an expected warning:

$ grep ACPI ~/.local/share/xorg/Xorg.0.log

(WW) Open ACPI failed (/var/run/acpid.socket) (No such file or directory)

The following command also fails at this point:

$ acpi_listen

acpi_listen: can't open socket /var/run/acpid.socket: No such file or directory

Enable and start the daemon:

$ sudo systemctl enable acpid.service

$ sudo systemctl start acpid.service

Test ACPI

$ acpi_listen

Now, pull the power cord!

ac_adapter ACPI0003:00 00000080 00000000

battery PNP0C0A:00 00000081 00000001

battery PNP0C0A:00 00000080 00000001

processor LNXCPU:00 00000080 00000009

processor LNXCPU:01 00000080 00000009

processor LNXCPU:02 00000080 00000009

processor LNXCPU:03 00000080 00000009

processor LNXCPU:04 00000080 00000009

processor LNXCPU:05 00000080 00000009

processor LNXCPU:06 00000080 00000009

processor LNXCPU:07 00000080 00000009

processor LNXCPU:00 00000081 00000000

processor LNXCPU:01 00000081 00000000

processor LNXCPU:02 00000081 00000000

processor LNXCPU:03 00000081 00000000

processor LNXCPU:04 00000081 00000000

processor LNXCPU:05 00000081 00000000

processor LNXCPU:06 00000081 00000000

processor LNXCPU:07 00000081 00000000

And reconnect the power cord:

battery PNP0C0A:00 00000081 00000001

ac_adapter ACPI0003:00 00000080 00000001

battery PNP0C0A:00 00000081 00000001

battery PNP0C0A:00 00000081 00000001

battery PNP0C0A:00 00000080 00000001

processor LNXCPU:00 00000080 00000000

processor LNXCPU:01 00000080 00000000

processor LNXCPU:02 00000080 00000000

processor LNXCPU:03 00000080 00000000

processor LNXCPU:04 00000080 00000000

processor LNXCPU:05 00000080 00000000

processor LNXCPU:06 00000080 00000000

processor LNXCPU:07 00000080 00000000

processor LNXCPU:00 00000081 00000000

processor LNXCPU:01 00000081 00000000

processor LNXCPU:02 00000081 00000000

processor LNXCPU:03 00000081 00000000

processor LNXCPU:04 00000081 00000000

processor LNXCPU:05 00000081 00000000

processor LNXCPU:06 00000081 00000000

processor LNXCPU:07 00000081 00000000

battery PNP0C0A:00 00000081 00000001
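To watch only for the power source change in that stream of events, a sketch like the following could filter the `acpi_listen` output. It relies solely on what the output above shows on my machine: the `ac_adapter` line ends in `00000000` when the cord is pulled and `00000001` when it is reconnected. Other hardware may report different values, and `ac_event_state` is a hypothetical helper name.

```shell
# Classify one acpi_listen line. Only ac_adapter events are considered.
# Assumption (from the output above): last field 00000000 = on battery,
# 00000001 = on AC power.
ac_event_state() {
    # Split the line into fields: class, device, event code, value
    set -- $1
    [ "$1" = "ac_adapter" ] || return 1
    case "$4" in
        00000000) echo "battery" ;;
        00000001) echo "ac" ;;
        *) return 1 ;;
    esac
}

# Example: print a line each time the power source changes
# acpi_listen | while read -r line; do ac_event_state "$line"; done
```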

You can also use nvidia-settings to see the current power source by checking the read-only GPUPowerSource parameter (0 = AC power, 1 = battery):

$ nvidia-settings -q GPUPowerSource -t

1

Understanding PowerMizer

What is PerfLevelSrc?

PerfLevelSrc specifies the clock frequency control strategy, in the following hexadecimal format:

0x<Byte 01 - battery source strategy><Byte 02 - AC power source strategy>

where:

- Byte 01 = clock policy on battery

- Byte 02 = clock policy on AC

The following values are valid for the bytes:

- 0x22 = fixed frequency

- 0x33 = adaptive frequency

The adaptive setting for both battery and AC power (0x3333) is always desired for power savings so that the GPU can scale down to the smallest possible clock frequency at any point in time – instead of remaining at the maximum clock frequency of the power mode you specify.
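As a sanity check on a value before putting it in the configuration file, here is a minimal sketch that splits a PerfLevelSrc value into its two bytes as described above. It recognises only the two byte values named in this post (0x22 and 0x33); `policy_name` and `decode_perflevelsrc` are hypothetical helper names.

```shell
# Name a single PerfLevelSrc byte, given as two hex digits.
policy_name() {
    case "$1" in
        22) echo "fixed" ;;
        33) echo "adaptive" ;;
        *)  echo "unknown" ;;
    esac
}

# Decode a value such as 0x3333 into its battery and AC clock policies.
decode_perflevelsrc() {
    local hex battery ac
    # Normalise to four lowercase hex digits: 0x3333 -> 3333
    hex=$(printf '%04x' "$1") || return 1
    battery="${hex%??}"   # first byte: policy on battery
    ac="${hex#??}"        # second byte: policy on AC
    echo "battery=$(policy_name "$battery") ac=$(policy_name "$ac")"
}
```

For example, `decode_perflevelsrc 0x3333` prints `battery=adaptive ac=adaptive`, and `decode_perflevelsrc 0x2233` prints `battery=fixed ac=adaptive`.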

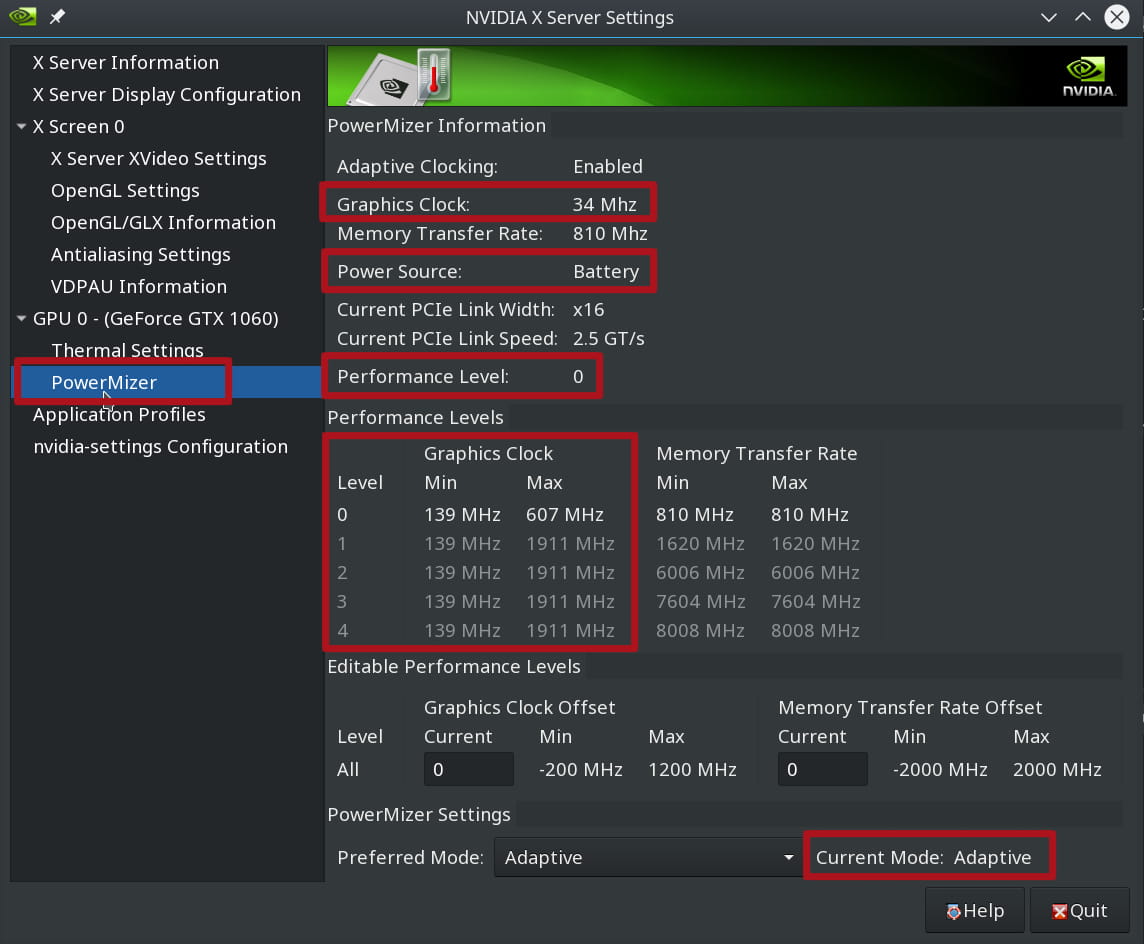

To see the different Performance Levels and their minimum and maximum clock speeds, you can use the Nvidia X Server Settings application.

What is interesting in the image above is that while on battery the instantaneous clock speed dropped to 34 MHz while it was on Performance Level 0, which has a minimum clock speed of 139 MHz. When on AC power, the instantaneous clock speed never dropped below 139 MHz.

Also of note, when on battery and running PowerMizer 0x3 maximum power savings, I could not make the Performance Level change from Level 0, nor could I make the clock speed go above 151 MHz, no matter whether a second monitor was connected or I had animated graphics applications running.

As soon as I plugged in the AC power, however, the clock speed immediately jumped to 569 MHz while still on Performance Level 0, even when running some animated graphics applications.

Another way to look at the Performance Levels is via the command line with $ nvidia-settings -q GPUPerfModes which for me resulted in:

Attribute 'GPUPerfModes' (MachineName:0.0):

perf=0, nvclock=139, nvclockmin=139, nvclockmax=607, nvclockeditable=1, memclock=405, memclockmin=405, memclockmax=405, memclockeditable=1, memTransferRate=810, memTransferRatemin=810, memTransferRatemax=810, memTransferRateeditable=1 ;

perf=1, nvclock=139, nvclockmin=139, nvclockmax=1911, nvclockeditable=1, memclock=810, memclockmin=810, memclockmax=810, memclockeditable=1, memTransferRate=1620, memTransferRatemin=1620, memTransferRatemax=1620, memTransferRateeditable=1 ;

perf=2, nvclock=139, nvclockmin=139, nvclockmax=1911, nvclockeditable=1, memclock=3003, memclockmin=3003, memclockmax=3003, memclockeditable=1, memTransferRate=6006, memTransferRatemin=6006, memTransferRatemax=6006, memTransferRateeditable=1 ;

perf=3, nvclock=139, nvclockmin=139, nvclockmax=1911, nvclockeditable=1, memclock=3802, memclockmin=3802, memclockmax=3802, memclockeditable=1, memTransferRate=7604, memTransferRatemin=7604, memTransferRatemax=7604, memTransferRateeditable=1 ;

perf=4, nvclock=139, nvclockmin=139, nvclockmax=1911, nvclockeditable=1, memclock=4004, memclockmin=4004, memclockmax=4004, memclockeditable=1, memTransferRate=8008, memTransferRatemin=8008, memTransferRatemax=8008, memTransferRateeditable=1
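That output is hard to read at a glance, so here is a sketch that condenses it to one short line per Performance Level. It assumes the `key=value` layout shown above, with entries separated by `;`, and `summarise_perf_modes` is a hypothetical helper name.

```shell
# Condense `nvidia-settings -q GPUPerfModes` output to one line per level.
# Reads the raw output on stdin.
summarise_perf_modes() {
    # Put each ";"-separated entry on its own line, then pick out a few keys
    tr ';' '\n' | awk -F', *' '
        /perf=[0-9]+/ {
            level = min = max = rate = "?"
            for (i = 1; i <= NF; i++) {
                split($i, kv, "=")
                key = kv[1]; sub(/^[ \t]+/, "", key)   # trim leading blanks
                val = kv[2]; sub(/[ \t]+$/, "", val)   # trim trailing blanks
                if (key == "perf")            level = val
                if (key == "nvclockmin")      min = val
                if (key == "nvclockmax")      max = val
                if (key == "memTransferRate") rate = val
            }
            printf "perf %s: nvclock %s-%s MHz, memTransferRate %s\n", level, min, max, rate
        }'
}

# Example:
# nvidia-settings -q GPUPerfModes | summarise_perf_modes
```

For the first entry above this prints `perf 0: nvclock 139-607 MHz, memTransferRate 810`.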

What are PowerMizerDefault and PowerMizerDefaultAC?

Power saving behaviours for running on battery and AC power sources are specified individually.

PowerMizerDefault is the power setting when running on battery.

PowerMizerDefaultAC is the power setting when running on AC power.

There are three PowerMizer modes that can be specified for PowerMizerDefault and PowerMizerDefaultAC:

- 0x1 - Maximum performance

- 0x2 - Balanced / medium performance

- 0x3 - Maximum power saving

Note: the Performance Levels do NOT equate to the PowerMizer mode settings! With my Nvidia GPU:

- 0x3 maximum power savings forces the GPU to live in Performance Level 0

- 0x2 medium performance forces the GPU to live between Performance Level 0 and 1 and quickly jump to Level 1 upon any major user interaction or graphic requirement

- 0x1 maximum performance lets the GPU scale across all Performance Levels and quickly jump straight to Level 4 upon any major user interaction or graphic requirement
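To avoid mistyping the hexadecimal codes, the option line for the X11 configuration file can be generated from readable mode names. This is only a sketch: the names `performance`, `balanced` and `powersave` are labels I made up for the three modes listed above, `powermizer_code` and `registry_dwords_option` are hypothetical helpers, and PerfLevelSrc is fixed at the adaptive 0x3333 value.

```shell
# Map a readable (made-up) mode name to its PowerMizer code.
powermizer_code() {
    case "$1" in
        performance) echo "0x1" ;;
        balanced)    echo "0x2" ;;
        powersave)   echo "0x3" ;;
        *) return 1 ;;
    esac
}

# Print the RegistryDwords option line.
# Usage: registry_dwords_option <battery_mode> <ac_mode>
registry_dwords_option() {
    local battery ac
    battery=$(powermizer_code "$1") || return 1
    ac=$(powermizer_code "$2") || return 1
    printf 'Option "RegistryDwords" "PowerMizerEnable=0x1; PerfLevelSrc=0x3333; PowerMizerDefault=%s; PowerMizerDefaultAC=%s"\n' "$battery" "$ac"
}
```

Calling `registry_dwords_option powersave balanced` reproduces the line I use in my configuration: maximum power savings on battery and balanced performance on AC.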

Bookmarks

- Arch Wiki - Prime

- Arch Wiki - Optimus

- Arch Wiki - NVIDIA/Tip and tricks

- Nvidia driver performance and power saving hints

- Forcing low-power mode with Nvidia cards on Linux

- Notes from Nvidia about Prime

Credits

- Linux penguin mascot originally drawn by Larry Ewing using The GIMP. See http://isc.tamu.edu/~lewing/linux/.